PanoContext: A Whole-room 3D Context Model

for Panoramic Scene Understanding

Abstract

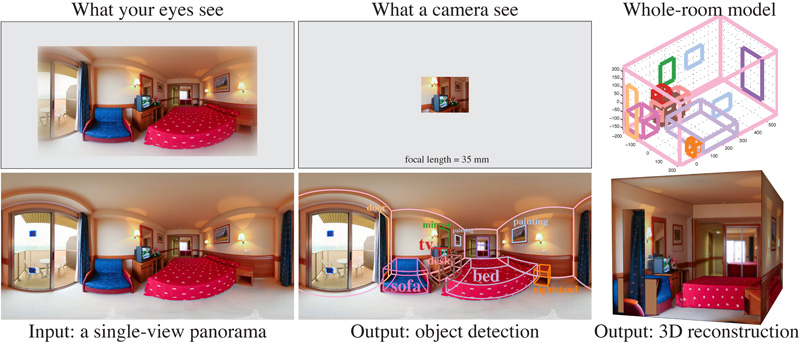

The field-of-view of standard cameras is very small, which is one of the main reasons that contextual information is not as useful as it should be for object detection. To overcome this limitation, we advocate the use of 360◦ full-view panoramas in scene understanding, and propose a whole-room context model in 3D. For an input panorama, our method outputs 3D bounding boxes of the room and all major objects inside, together with their semantic categories. Our method generates 3D hypotheses based on contextual constraints and ranks the hypotheses holistically, combining both bottom-up and top-down context information. To train our model, we construct an annotated panorama dataset and reconstruct the 3D model from single-view using manual annotation. Experiments show that solely based on 3D context without any image region category classifier, we can achieve a comparable performance with the state-of-the-art object detector. This demonstrates that when the FOV is large, context is as powerful as object appearance. All data and source code are available online.

Paper

-

Y. Zhang, S. Song, P. Tan, and J. Xiao.

PanoContext: A Whole-room 3D Context Model for Panoramic Scene Understanding.

Proceedings of the 13th European Conference on Computer Vision (ECCV2014)

Oral Presentation

Video

- Full video (8'23"): Long video including algorithm details.

- Spotlight (1'00"): 1 min video spotlight.

Talk

|

Oral presentation at the main conference: Keynote slides (409MB) and PDF slides (73MB). Video recording of the talk: http://videolectures.net/eccv2014_zhang_panoramic_scene/. |

Dataset and Source code

- panoContext_data.zip (4.4GB): This file contains 2D and 3D annotation on bedroom and living room panoramas.

- panoContext_code.zip (128.7MB): This file contains source codes of this project. We also provide a clean version of toolbox for basic panoramic image processing below.

Panoramic Image Processing Toolbox

- PanoBasic.zip (56.2MB): This file contains many useful function for panoramic image processing.

- PanoBasic_DemoFigures.zip (76.7MB): If you run PanoBasic demo correctly, you should be able to get exactly the same figures as in this file.

- panorama.pdf: This file contains an explanation of panorama geometry.

Poster

- panocontext_poster_eccv_final.pdf Poster for ECCV2014.

- poster.pdf Poster for CVPR SUNW workshop.

Annotated Panorama Dataset

Supplementary material

- supp.pdf: Due to page limit, we moved a lot of technical details to this file. This file also contains more visualized result of our method.

Algorithm Analysis

- theory.pdf: This file contains an analytical formulation of our model for people who wish to do theoretical study on our model.